Link building is the process of getting other websites to link to pages on your site. Links from high authority sites carry a lot of value.

However, it’s not always easy to obtain links from authoritative websites.

Although these links are hard to get, they can have a huge impact on your organic traffic. The good news is that the internet is full of great link opportunities. You just need to know how to find them.

You’re probably asking yourself “But how do I find these link opportunities?”

Well, fear not, because we’ve got you covered!

In this post, we’ll take you through 5 really easy link prospecting methods that you can use to find hundreds of link opportunities and build into your link building strategy right away!

Here’s everything that we’ll cover:

- The Snowball Method

- Unlinked Brand Mentions

- Listicle Outreach

- Digital PR

- Guest Posts

1. The Snowball Method

In 2022, while working on a particularly tricky link building campaign, we invented “The Snowball Method”.

The snowball method is like link prospecting on steroids. It’s a really easy way to get a lot of new link prospects, especially when your product or service is in a less popular niche.

Finding link opportunities is easy when you’re in a popular niche such as marketing or sales. However, if you don’t find yourself in that bucket you’ll have to be a bit more inventive.

For example, say you’re looking to build links to a page about web hosting. It’s not a niche that’s covered a lot, so you’ll run out of potential prospects after you’ve found a couple of hundred.

You might find listicles such as “Top 10 best web hosting providers 2023” (which are ideal for link building) but you will quickly run out of these opportunities due to the nature of the niche.

With the snowball method, you need to identify shoulder niches: topics adjacent to your industry whose audience is likely to need what you offer.

Sticking with the web hosting example, your shoulder niches could be sites that talk about:

- How to start a business

- Affiliate marketing

- How to start a blog

Why?

Well, if you’re setting up your own business, starting a blog or doing affiliate marketing, there is a high chance you will need a web hosting solution for your website that will keep your content secure when it reaches your customers.

So how do you find these sites?

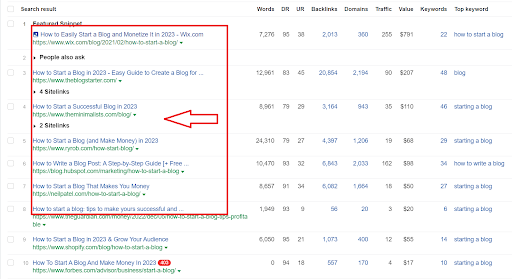

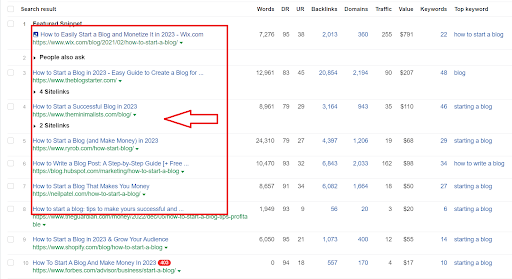

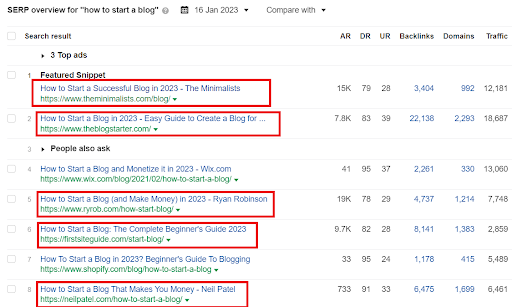

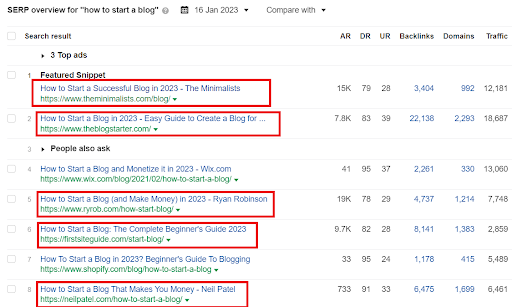

Go to Ahrefs > Keywords Explorer > Type in your keyword/phrase > Export SERP overview

At the bottom of the page, you will find a ton of websites you can reach out to for a link-building collaboration.

Remember: if you’re doing outreach, it’s important to make sure you’re not emailing your competitors, so check the sites you choose thoroughly.
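If your exported list is long, part of the competitor check can be scripted. The sketch below is one possible approach, not an Ahrefs feature: it assumes you have pulled the URLs out of your export into a plain Python list and that you maintain your own list of competitor domains. The function names and the `rival-host.example` domain are made up for illustration.

```python
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Return the lowercase host of a URL, without a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def drop_competitors(urls, competitors):
    """Keep one URL per domain, skipping any domain on the competitor list."""
    blocked = {c.lower() for c in competitors}
    seen = set()
    kept = []
    for url in urls:
        domain = domain_of(url)
        # Skip URLs without a host, competitor domains, and repeat domains.
        if not domain or domain in blocked or domain in seen:
            continue
        seen.add(domain)
        kept.append(url)
    return kept


prospects = drop_competitors(
    [
        "https://www.ryrob.com/start-a-blog/",
        "https://ryrob.com/another-post/",
        "https://rival-host.example/pricing",  # hypothetical competitor
    ],
    competitors=["rival-host.example"],
)
print(prospects)
```

A script like this only catches the competitors you already know about, so a manual look through the remaining sites is still worthwhile.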

Once you have compiled your sites from the SERP overview, you can dig even deeper to find more link prospects.

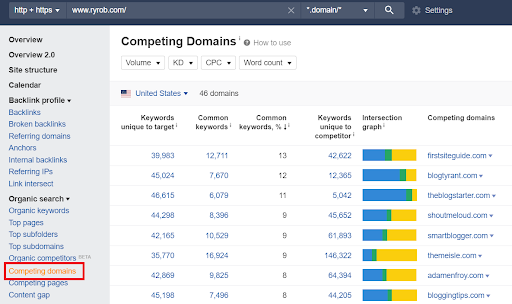

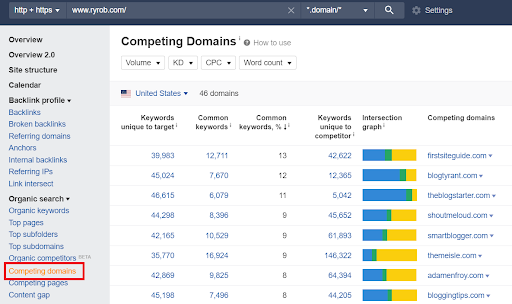

Choose a website from your SERP overview list. For this example, I will choose ryrob.com.

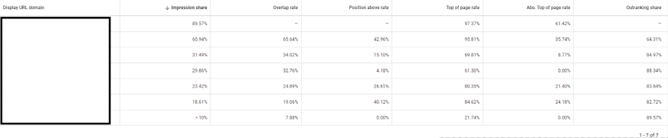

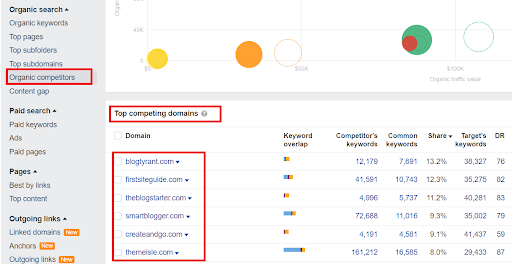

Go to Ahrefs > Site Explorer > Input your site > Competing domains

In the competing domains section, you will find sites that ryrob.com is competing with. Analyze the keywords these websites rank for. This will give you more keywords, which you can use to find even more relevant sites that might link to your web hosting page.

How do you find these keywords?

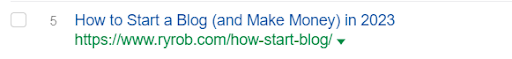

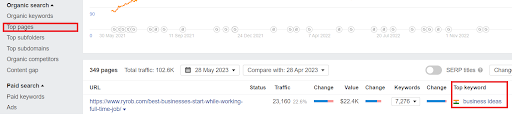

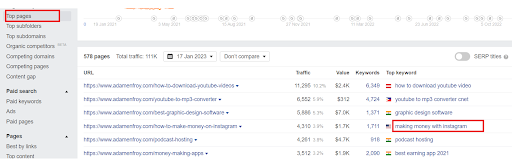

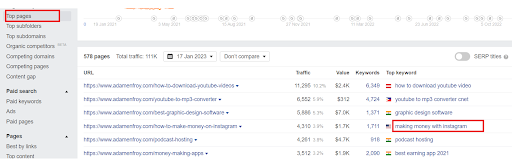

Pick a website from the competing domains section. For this example, I will choose adamenfroy.com.

Go to Ahrefs > Site Explorer > Top Pages > Top Keyword

Look at the website’s top keywords based on their top pages. One of this website’s top keywords is “making money with Instagram”. If you want to make money through social media, there is a high chance you will need a website that your followers can visit to learn more about your offer, and that website needs hosting.

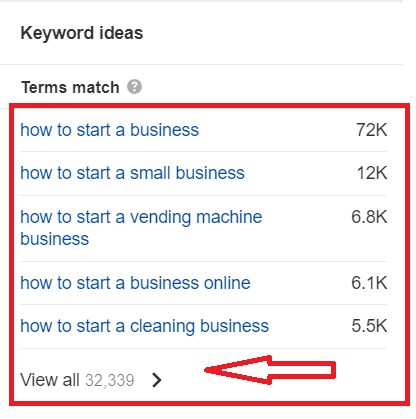

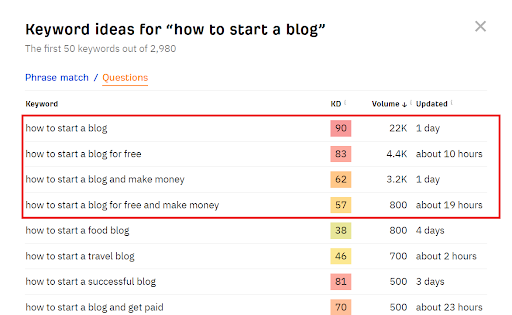

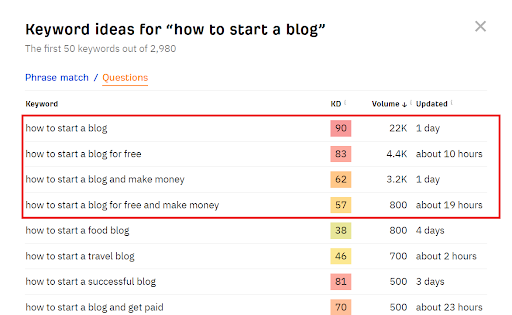

If you’re ever stuck for keyword ideas, use Ahrefs’ free keyword generator.

You will find thousands of keyword ideas in seconds based on a phrase or question. The questions are a great way to see what people are searching for. Take note of the relevant keywords/phrases and input them into Keywords Explorer to find more link opportunities.

The beauty of the snowball method is that every site you find surfaces new keywords, and every keyword surfaces new sites, so you’re unlikely to run out of link-building opportunities for your niche.

Once you’ve identified the posts you want to get mentioned on, start building a list of the appropriate contact names and email addresses, then reach out to start building the relationship.

2. Unlinked Brand Mentions

Unlinked brand mentions are mentions of your brand on other websites that do not provide a link back to your site.

This is a fast way to pick up links: because these sites have already mentioned your brand, they may be more open to adding a link.

So, how do you find these pages?

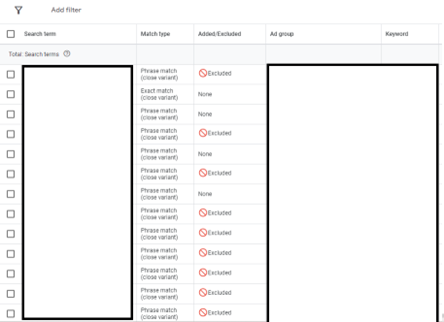

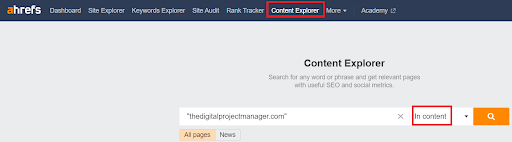

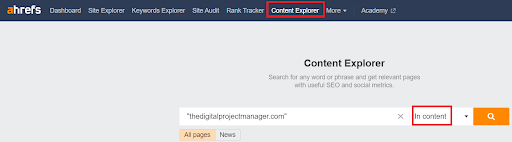

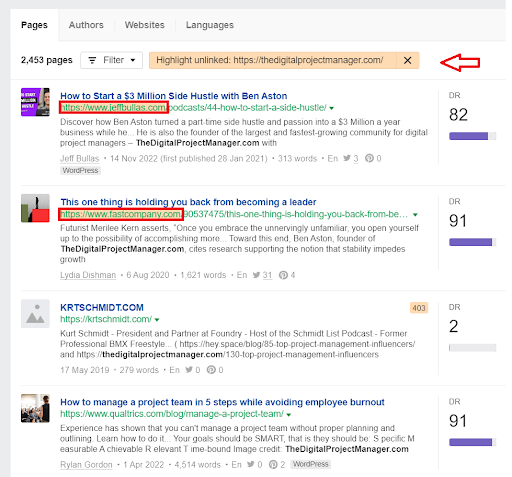

Go to Ahrefs > Content Explorer > Type your brand name in quotes > Choose “In content” from the dropdown menu

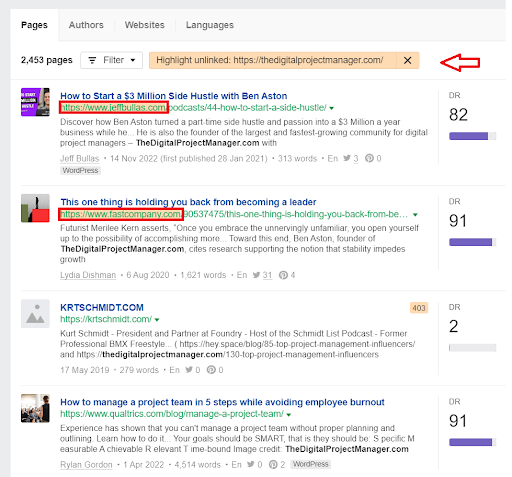

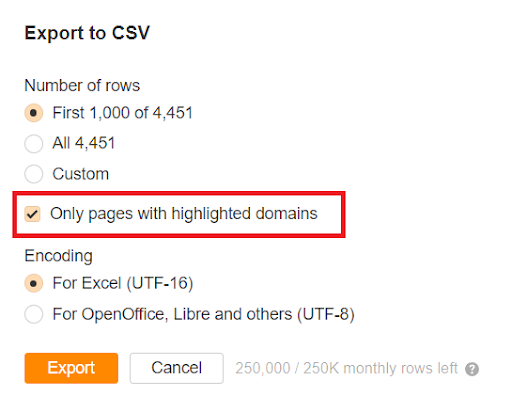

Select the highlight unlinked filter, which highlights the pages that mention your brand but don’t link back to your website.

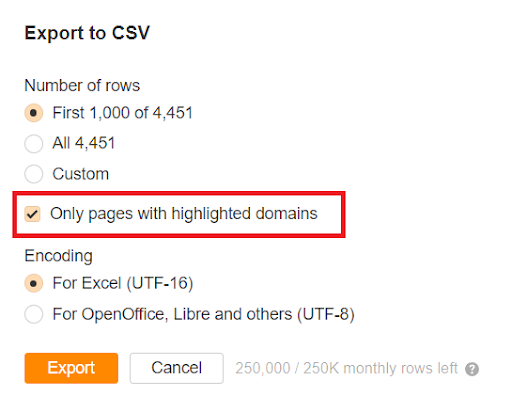

Export to a CSV file, and make sure you export highlighted domains only. This will ensure you’re looking at the right websites and not wasting time.

Filter your prospect list after exporting it. You might have hundreds or thousands of mentions to sift through but not all of them will be relevant. Some of them might be 404 pages or some might already link to your website.

Be prepared: this manual work is tedious, but it’s worth it in the long run. The last thing you want is to ask someone to link to you when they already do. It will just annoy them and make you look unprofessional.
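Some of this checking can be automated. The sketch below is a minimal example under stated assumptions: it assumes you have already fetched each page yourself and have its HTTP status code and HTML to hand (the fetching step is left out), and the function names and `example.com` domain are hypothetical. It keeps a page only if it loaded successfully and does not already contain a link to your domain.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class _LinkCollector(HTMLParser):
    """Collect the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def already_links(html: str, our_domain: str) -> bool:
    """Return True if the page links to our domain or one of its subdomains."""
    parser = _LinkCollector()
    parser.feed(html)
    target = our_domain.lower()
    for href in parser.hrefs:
        host = urlparse(href).netloc.lower()
        if host == target or host.endswith("." + target):
            return True
    return False


def keep_prospect(status_code: int, html: str, our_domain: str) -> bool:
    """Keep pages that loaded fine (HTTP 200) and do not link to us yet."""
    return status_code == 200 and not already_links(html, our_domain)


page = '<p>We like Example. <a href="https://www.example.com/">Visit</a></p>'
print(keep_prospect(200, page, "example.com"))
```

This only checks links in the raw HTML. Pages that build their links with JavaScript would still need a manual look.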

Focus on the highest-value mentions after you have filtered your list.

Why?

A link from an authoritative website carries more weight than links from less reputable sites. As a result, it can strengthen your page authority and help improve your rankings.

It’s important to personalize your outreach email so the individual opens it.

So, how can you personalize your email?

- Use the individual’s name in the subject line

- Keep the email short and concise (no waffling)

- Mention something about the blog/article that resonated with you so they know you’ve read it

- Offer them something in return (e.g. a collaboration/affiliate program)

Link building is about building relationships as much as it is about building links, so make sure you’re always willing to provide value to the other party.

What happens if you reach out and don’t hear anything back? Don’t give up!

Make sure to send at least two follow-up emails as the person might have missed your previous email.

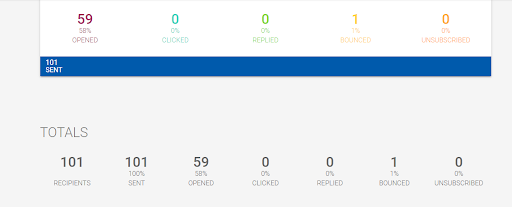

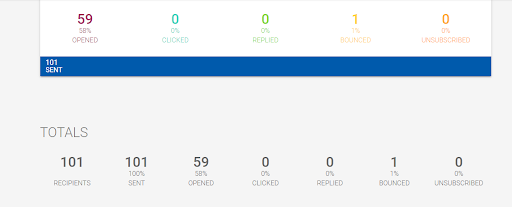

Research shows that the average worker receives 121 emails per day. Therefore, personalisation is crucial for your email to stand out and thus have a higher chance of being opened. If you don’t want to send your emails manually you can use a tool like Mailshake.

Mailshake is a simple email outreach and sales engagement software. It has all the analytics you need to monitor opens, replies, and clicks for every email you send.

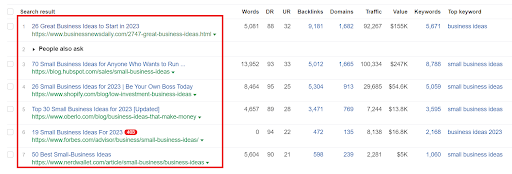

3. Listicle Outreach

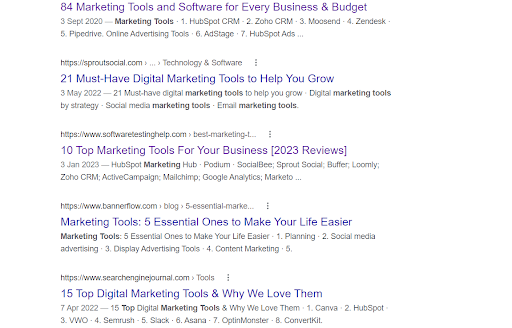

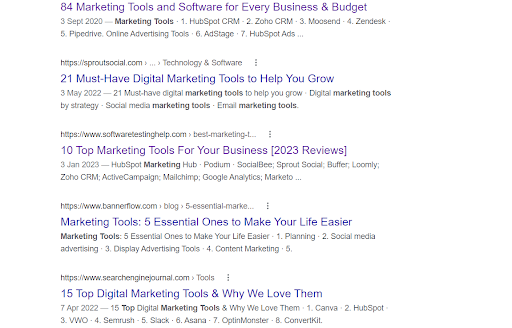

Listicles are blog posts or articles written in a list-based format. This sort of link building may get you extra visibility and referral traffic from websites that already rank well for keywords such as:

- Best X tools

- Top 10 X tools

- Top products for X

If your product/service is in a popular niche such as marketing or sales you will find endless opportunities.

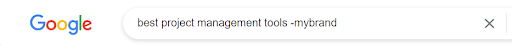

When doing your search, exclude the sites that already mention your brand by using the minus operator. Put a minus sign directly in front of your brand’s name to exclude any pages that contain it in their content.

This will make the process easier to manage.
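If you are searching many topics, you can generate the queries instead of typing them out. The sketch below builds one search query per listicle pattern mentioned above and appends the brand exclusion. The function name and the `Acme` brand are made up for illustration, and the brand is wrapped in quotes so that multi-word brand names are excluded as a phrase.

```python
def listicle_queries(topic: str, brand: str) -> list[str]:
    """Build listicle search queries that exclude pages mentioning the brand."""
    # The minus sign sits directly in front of the quoted brand name.
    exclusion = f'-"{brand}"'
    patterns = [
        "best {x} tools",
        "top 10 {x} tools",
        "top products for {x}",
    ]
    return [f"{pattern.format(x=topic)} {exclusion}" for pattern in patterns]


for query in listicle_queries("email marketing", "Acme"):
    print(query)
```

Paste each generated query into your search engine of choice and collect the results that fit your niche.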

Once you compile your list of sites it’s time to do the outreach. If you can’t find someone’s email address there are two things you can do:

- Use Hunter or similar tools to find a person’s email address if their name is mentioned in the article/blog

OR

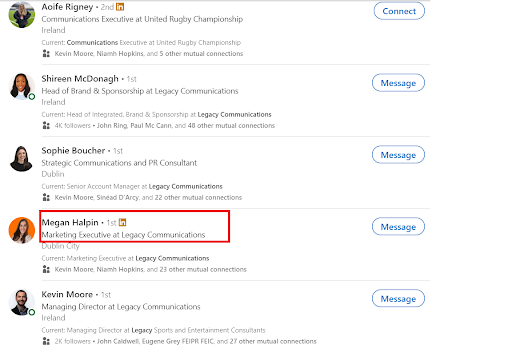

- Use LinkedIn to find a point of contact if no name is mentioned

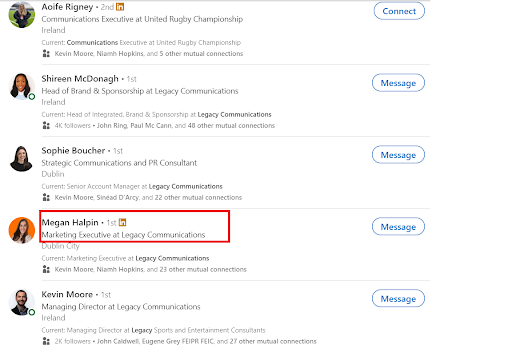

Go to LinkedIn > Type the company’s name > Click on people

If you want to be added to someone’s website look for people working in Marketing.

They will be your window of opportunity.

As mentioned previously, ensure your email/message is personalized and you’re being transparent about what you want.

Remember! If you confuse them you lose them!

If the marketing manager approves your link request it’s important to ensure your content matches the existing content already on the page.

What does that mean?

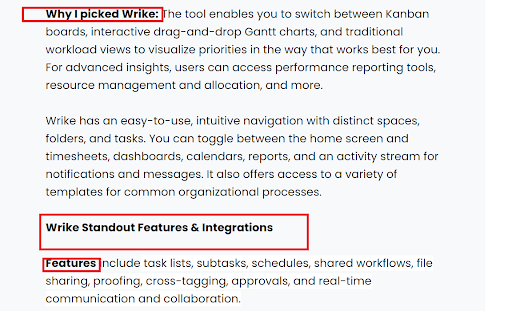

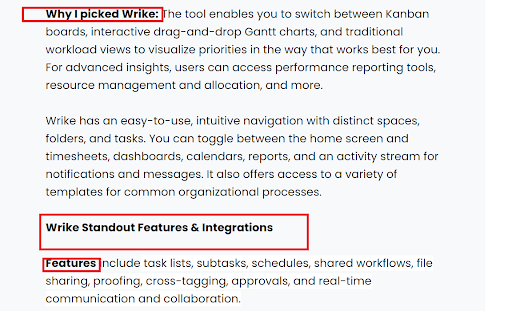

Every listicle page is formatted differently. The listicle example shown here is from the site Digital Project Manager. If you were writing a blurb for them, you would need to include the headings they are using. Otherwise, your content will look unnatural. Always analyze the format of the page before writing your content.

4. Digital PR

You know that saying: “Don’t wait for opportunity. Create it.”

Link-building is all about being innovative and taking action when an opportunity presents itself. Digital PR is a great way to get links from high-authority sites and boost organic traffic. Digital PR is about creating linkable assets that journalists will be eager to share with their audience. There are many different PR frameworks you can use:

- Reactive and Proactive

- Product PR

- Data-led/Data Stories

- Thought Leadership/Expert-led PR

- Creative Campaigns or Stunts

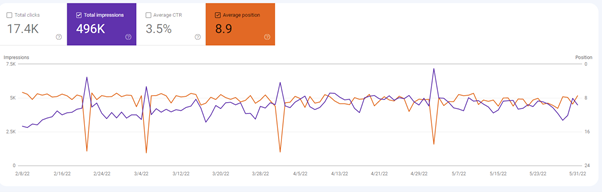

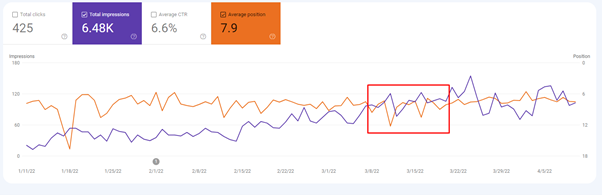

We use Digital PR for our clients and for our own brand as well.

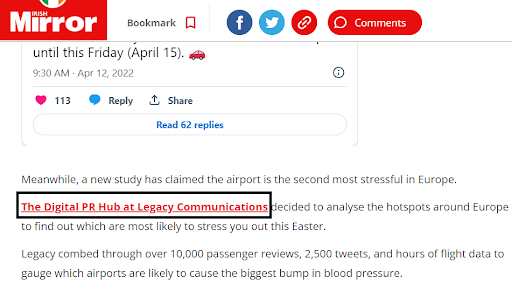

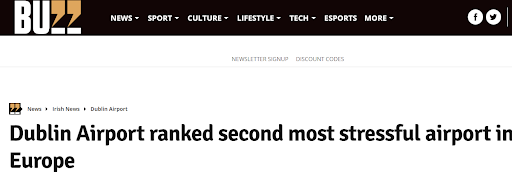

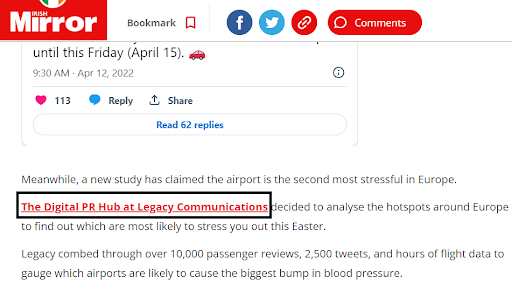

For example, we published a data-led story that revealed which airports are the most stressful.

For Legacy’s Airport release, our Digital PR team used data from a variety of sources including flight-tracking apps, airport ranking sites, and sentiment analysis tools.

The release tapped into a topic that was already trending, right before the Easter travel season began.

We secured 114 pieces of strong coverage in national, regional and broadcast publications worldwide, with features in publications such as:

- Irish Examiner

- Breakingnews.ie

- Irish Mirror

- Standard.co.uk

- Independent

- Her.ie

But wait, there’s more…we also secured:

- 40 links to our website

- 400% increase in referral traffic over two days

- 160 brand mentions

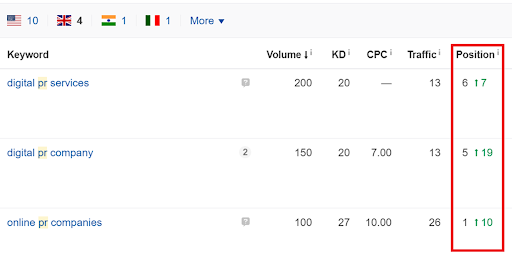

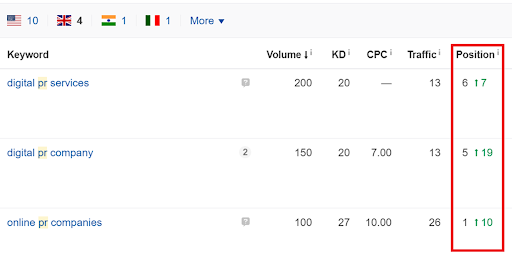

Legacy also climbed the rankings in the UK, moving from position 7 to 6 for the term “Digital PR service”, from 19 to 5 for “Digital PR company”, and from 10 to 1 for “online PR companies”.

Meanwhile in the U.S., Legacy moved from position 12 to 5 for the term “Digital PR agency”, from 7 to 5 for “digital PR services”, from 10 to 3 for “Digital PR service”, from 0 to 6 for “Digital PR firms”, and from 18 to 4 for “Digital PR companies”.

5. Guest Posts

Guest posting is the act of writing content for another company’s website. It’s a great way to acquire links and attract traffic back to your website.

But I thought guest posting was dead? The truth is that this method still works.

When writing guest posts make sure you use the synonym method.

What is the synonym method?

Ryan Stewart from Webris showed us the synonym method and we’ve been using it for years.

The synonym method is all about finding people/sites that are in a related niche but aren’t your competitors.

For example, if you offer a home painting service you would not be reaching out to other painters in your local area to be featured on their blog. As you are competitors, they would quickly tell you where you could stick your guest post.

So instead, you would reach out to websites in shoulder niches, such as:

- Real estate agents

- Roofers

- Pest control companies

Real estate agents would be a good group to pitch your guest post ideas to.

Why?

Well, real estate agents want to sell as many properties as possible. If you’re a painter you could pitch the following topic ideas:

- 3 paint colours to sell your home faster

- 3 paint colours that increase the value of your home

- Which colours to use for home staging

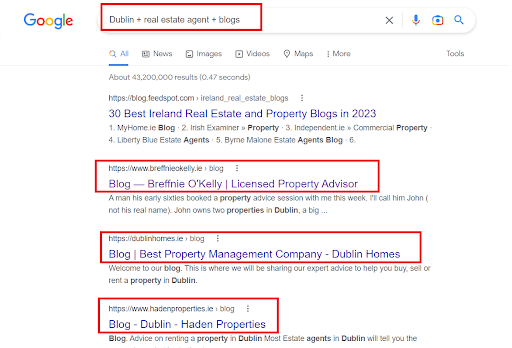

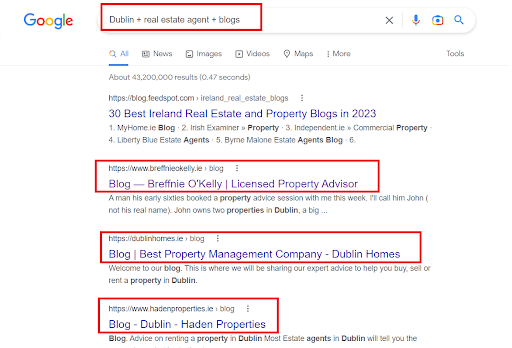

How do you find these local blogs?

Use search queries such as:

- City + keyword + blog

- City + keyword + blogs

- City + keyword + bloggers
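If you cover several cities or shoulder niches, the combinations add up quickly. The sketch below generates every city, keyword and suffix combination from the patterns above so you can work through them one by one. The function name and the sample city and keywords are illustrative only.

```python
from itertools import product


def local_blog_queries(cities, keywords):
    """Combine each city and keyword with the 'blog', 'blogs' and 'bloggers' suffixes."""
    suffixes = ["blog", "blogs", "bloggers"]
    return [
        f"{city} {keyword} {suffix}"
        for city, keyword, suffix in product(cities, keywords, suffixes)
    ]


for query in local_blog_queries(["Leeds"], ["real estate", "roofing"]):
    print(query)
```

For one city and two keywords this produces six queries, so it is worth starting with the shoulder niches that fit your service best.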

Once you’ve compiled your list of sites, make sure to mention in your email why you want to write a guest post for their site.

Reasons could include:

- You want to provide educational content their readers will find valuable

- You want to share your expertise with their readers

Remember, link-building is about reciprocity and building a relationship, so always provide something in return, whether it’s:

- A link

- Mentioning their brand in your next newsletter/blog

- Mentioning their brand on your socials

- Offering an affiliate program

Happy Linking! 🔗

If you’re interested in taking your link-building to the next level in 2023, get in touch with our amazing Search team who will work with you to move your business up the rankings and help you find link opportunities.
